In audits, governance is not evaluated by intent, policies, or architecture diagrams. It is evaluated by evidence.

For AI systems, especially those using large language models, this evidence is often fragmented, implicit, or entirely missing. Teams know how their systems behave, but cannot demonstrate how decisions are controlled, reviewed, or logged in a way auditors can verify.

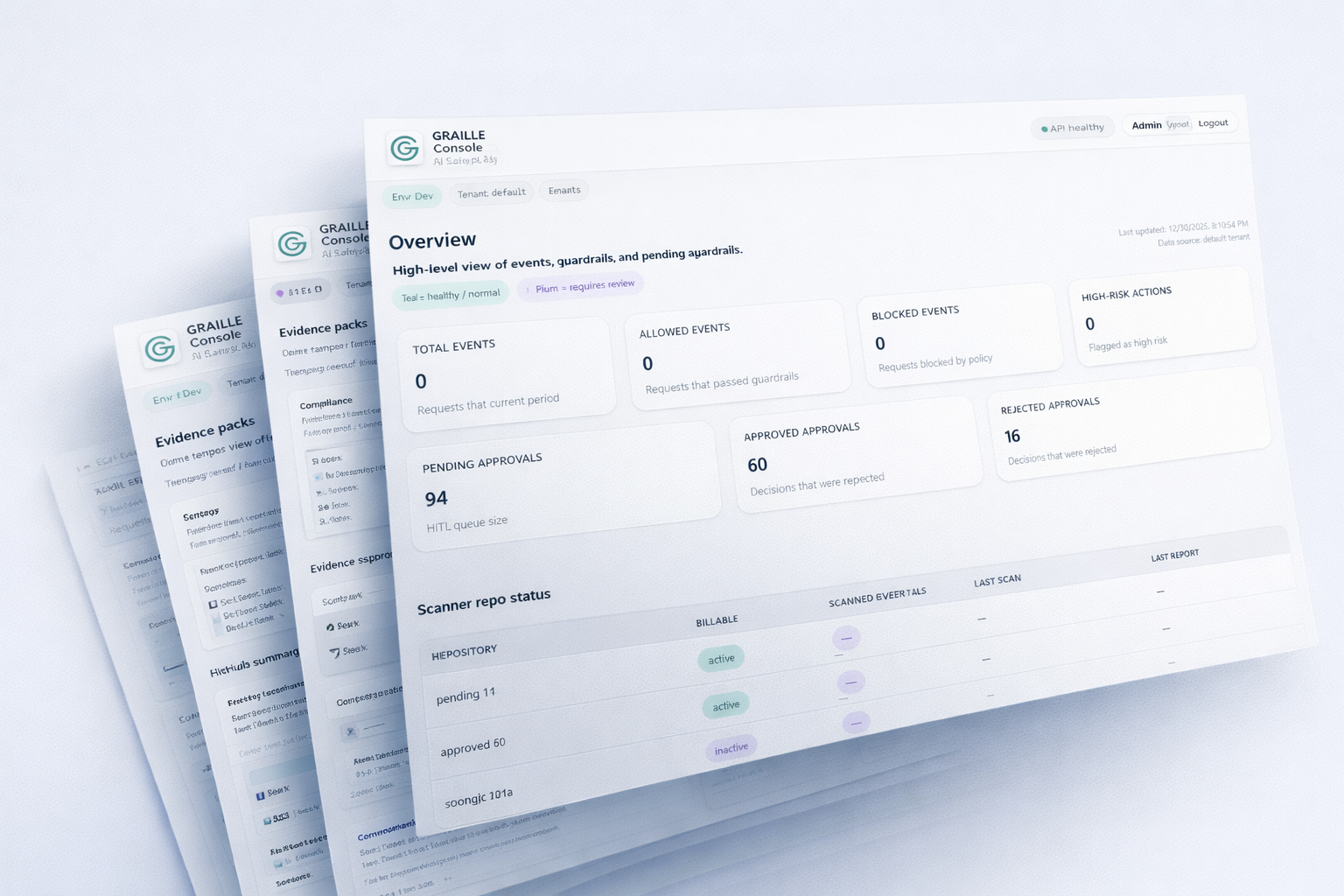

Graille is designed to make AI governance auditable before it is enforceable.

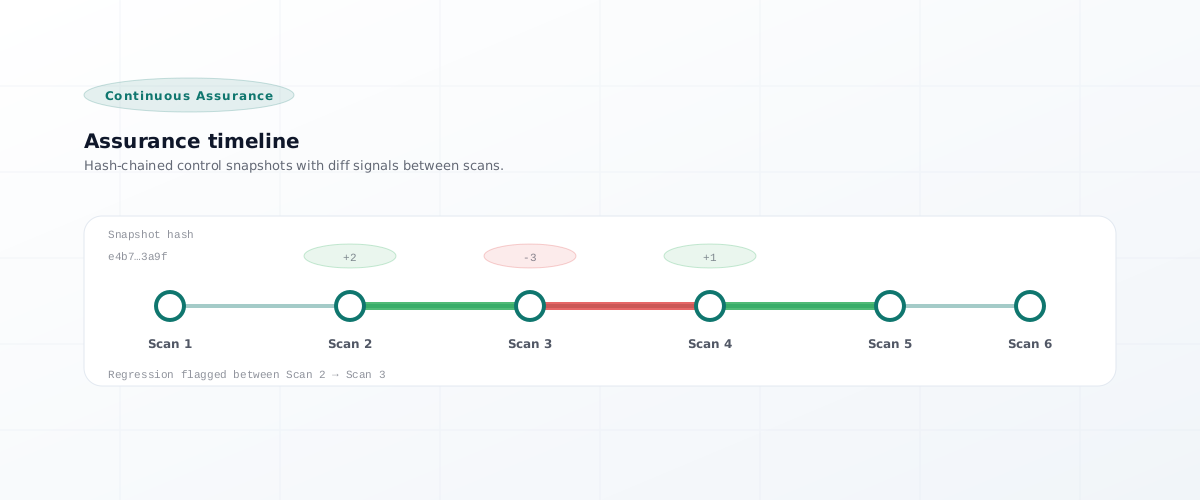

- Assess: Define audit expectations before enforcement exists.

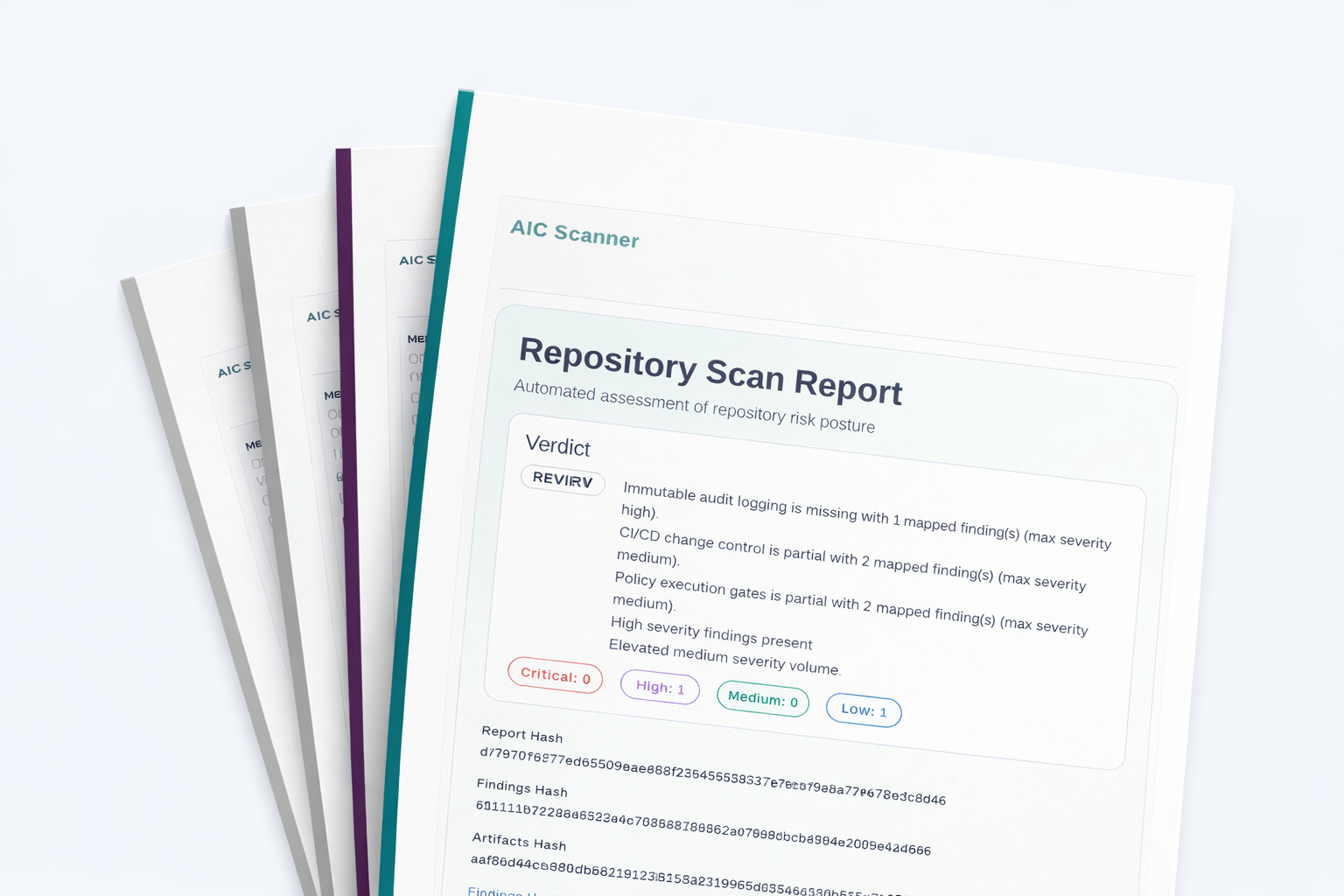

- Produce: Generate explicit evidence and control gap categories.

- Enforce: Apply runtime controls only after posture is defined.
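
To make the three stages concrete, here is a minimal Python sketch of how they could fit together. It is an illustration under stated assumptions, not Graille's actual API: the names `Expectation`, `assess`, `produce`, and `enforce`, the example controls, and the gap categories `evidenced`, `partial`, and `missing` are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Expectation:
    """Hypothetical audit expectation: a control and the evidence it requires."""
    control_id: str
    description: str
    required_evidence: tuple[str, ...]


def assess() -> list[Expectation]:
    """Assess: state what an auditor would expect, before any enforcement exists."""
    return [
        Expectation("human-review", "High-risk outputs are reviewed by a person",
                    ("review_log",)),
        Expectation("decision-logging", "Each model decision is logged and retained",
                    ("decision_log", "retention_policy")),
    ]


def produce(expectations: list[Expectation], available: set[str]) -> dict[str, str]:
    """Produce: sort each control into an explicit evidence or gap category."""
    report: dict[str, str] = {}
    for exp in expectations:
        found = [item for item in exp.required_evidence if item in available]
        if len(found) == len(exp.required_evidence):
            report[exp.control_id] = "evidenced"
        elif found:
            report[exp.control_id] = "partial"
        else:
            report[exp.control_id] = "missing"
    return report


def enforce(report: dict[str, str]) -> list[str]:
    """Enforce: select controls for runtime enforcement only once posture is defined."""
    if not report:
        raise ValueError("posture is undefined: run assess and produce first")
    return sorted(cid for cid, status in report.items() if status == "evidenced")


if __name__ == "__main__":
    # Assumed inventory of evidence the team can actually show an auditor.
    posture = produce(assess(), {"review_log", "decision_log"})
    print(posture)
    print(enforce(posture))
```

In this sketch, enforcement refuses to run without a posture report, which mirrors the ordering above: expectations and gaps are made explicit first, and runtime controls are applied only to what is already evidenced.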